For a long time, Google and musical instruments did not go well together, especially when you compare the music-making apps available for Android and iOS. The result is well known: so far, iOS is the best mobile operating system for musicians. But Google can also do things differently.

In a current project, Google is working with Magenta, a research project that explores the role of machine learning in the creation of art and music. This collaboration produced NSYNTH SUPER, a new open source hardware synthesizer based on machine learning.

How Does It Work?

The engine is based on a complex neural network that can learn the characteristics of sounds and create entirely new sounds from those characteristics. The developers recorded samples from various acoustic and electronic instruments (drums, guitar, synthesizer) and fed them into the engine. With the help of the deep neural network, NSYNTH SUPER then creates new timbres. It is not a sample player: the newly developed algorithm analyzes the entire character of a sound and builds a completely new timbre from that source.

During the development phase, 16 different sounds were recorded across a range of 15 pitches and fed into the algorithm. The result was more than 100,000 new sounds, which gives you an idea of how versatile NSYNTH SUPER is.

Rather than combining or blending the sounds, NSYNTH synthesizes an entirely new sound from the acoustic qualities of the originals, so you could get a sound that is part flute and part sitar at once. Using an autoencoder, it extracts 16 defining temporal features from each input. These features are then interpolated linearly to create new embeddings (mathematical representations of each sound), which are decoded into new sounds that carry the acoustic qualities of both inputs.
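The linear interpolation step can be illustrated with a small sketch. It assumes each embedding is stored as a list of time frames, with each frame holding the 16 feature values the autoencoder extracts. The function name `interpolate_embeddings` and the toy values are hypothetical, and decoding the result back into audio is not shown.

```python
from typing import List

# Assumed representation: an embedding is a list of time frames,
# each frame holding 16 feature values (one per extracted feature).
Embedding = List[List[float]]

def interpolate_embeddings(a: Embedding, b: Embedding, t: float) -> Embedding:
    """Linearly interpolate two embeddings of the same shape.

    t = 0.0 returns a, t = 1.0 returns b; values in between mix both.
    """
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must lie in [0, 1]")
    if len(a) != len(b) or any(len(fa) != len(fb) for fa, fb in zip(a, b)):
        raise ValueError("embeddings must have the same shape")
    # Element-wise (1 - t) * a + t * b, frame by frame.
    return [
        [(1.0 - t) * xa + t * xb for xa, xb in zip(frame_a, frame_b)]
        for frame_a, frame_b in zip(a, b)
    ]

# Toy example: two hypothetical "sounds", each 2 frames x 16 features.
flute = [[0.0] * 16, [1.0] * 16]
sitar = [[1.0] * 16, [3.0] * 16]
halfway = interpolate_embeddings(flute, sitar, 0.5)
print(halfway[0][0], halfway[1][0])  # 0.5 2.0
```

Sweeping `t` from 0 to 1 walks from one source to the other; in the real system, each intermediate embedding would then go through the decoder to become audio.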

In my opinion, the newly developed interface is also very exciting. The developers fitted it with several dials and a touch display, and each dial is assigned four source sounds. Using the dials, musicians select the source sounds they want to explore, then drag a finger across the touchscreen to navigate the new sounds that combine the acoustic qualities of the four sources.
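How a finger position on the touchscreen might mix four sources can be sketched with bilinear weighting. This is an illustrative assumption, not taken from the NSYNTH SUPER source code: the corner layout, the normalization of the touch position to the range 0 to 1, and the names `corner_weights` and `blend_four` are all hypothetical.

```python
from typing import Dict, List

# Assumed representation: list of time frames, each a list of features.
Embedding = List[List[float]]

def corner_weights(x: float, y: float) -> Dict[str, float]:
    """Bilinear weights for a touch position (x, y) normalized to [0, 1].

    Assumed layout: (0, 0) is top-left, (1, 1) is bottom-right.
    The four weights always sum to 1.
    """
    x = min(max(x, 0.0), 1.0)  # clamp to the touch surface
    y = min(max(y, 0.0), 1.0)
    return {
        "top_left": (1 - x) * (1 - y),
        "top_right": x * (1 - y),
        "bottom_left": (1 - x) * y,
        "bottom_right": x * y,
    }

def blend_four(corners: Dict[str, Embedding], x: float, y: float) -> Embedding:
    """Weighted sum of the four corner embeddings at position (x, y)."""
    weights = corner_weights(x, y)
    reference = corners["top_left"]
    result = [[0.0] * len(frame) for frame in reference]
    for name, w in weights.items():
        for i, frame in enumerate(corners[name]):
            for j, value in enumerate(frame):
                result[i][j] += w * value
    return result

# Toy corners: one frame of 16 features each, with constant values.
corners = {
    "top_left": [[0.0] * 16],
    "top_right": [[1.0] * 16],
    "bottom_left": [[2.0] * 16],
    "bottom_right": [[3.0] * 16],
}
center = blend_four(corners, 0.5, 0.5)
print(center[0][0])  # 1.5
```

With this weighting, each source dominates near its own corner, and the center of the screen gives an equal four-way mix. Other weighting schemes would be just as plausible.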

The Idea Seems Familiar To Me

The idea behind NSYNTH SUPER is very exciting, but it feels a bit familiar to me. I may be wrong, but it strongly reminds me of the Eisenberg Audio Einklang synthesizer, which is based on the same principle: samples are analyzed by an algorithm and then made available to the user as timbres within an engine. With Einklang, however, it was never made clear how the engine works as a whole.

How Can I Get An NSYNTH SUPER?

All the technology and design used to create NSYNTH SUPER is available as an open source project.

Like all Magenta projects, NSynth Super is built using open source libraries, including TensorFlow and openFrameworks, to enable a wider community of artists, coders, and researchers to experiment with machine learning in their creative process. The open source version of the NSynth Super prototype, including all of the source code, schematics, and design templates, is available for download on GitHub.

Don’t Have The Knowledge To Build An NSYNTH SUPER? No Problem

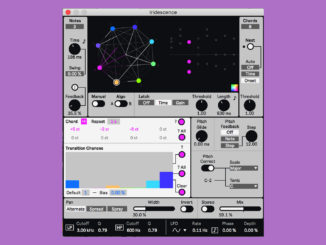

The developers also offer a nice Max for Live device that packs the NSYNTH engine into an easy-to-use interface. You can download it here: NSYNTH for M4L

More information here: NSYNTH SUPER
